The Crisis Nobody's Talking About: Why Your Netflix Habit Is Heating Up the Planet

Every time you stream a movie, ask an AI chatbot a question, or upload a photo to the cloud, you're participating in something most people don't even realize: a global energy crisis that's unfolding in real time.

Here's the uncomfortable truth: data centers consume more electricity than entire countries. They're using so much power that communities surrounding these massive server farms are experiencing air pollution and water stress that affects their health and quality of life. And it's about to get dramatically worse.

AI is hungry, voraciously and insatiably hungry, for power. When you use ChatGPT or generate an image with AI, you're not just using a little electricity. You're adding to a load that is straining regional power grids. By 2028, AI alone could consume as much electricity as 22% of all American households. Utilities are struggling to keep up, because power companies didn't build their infrastructure for this. Some communities now face the threat of blackouts and rolling brownouts.

But what if there was another way? What if we could solve this problem by looking up instead of down?

That's the wild, audacious bet that a small group of entrepreneurs and tech visionaries are making right now. They're launching data centers into space.

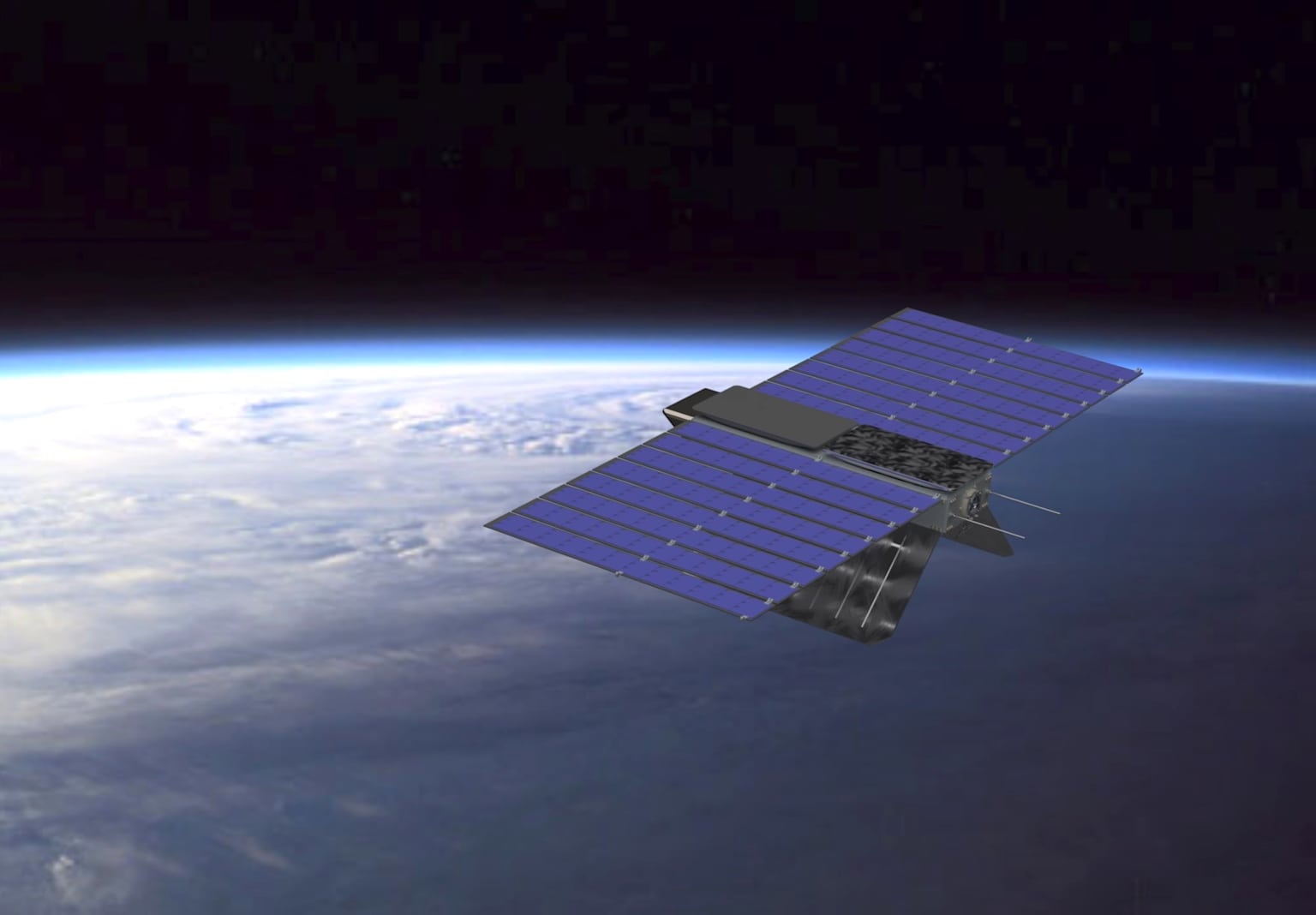

Rendering of an orbital satellite equipped with solar panels, symbolizing space-based data centers powered by sustainable energy for advanced AI operations

The Man Who Dared to Dream Beyond Earth: Philip Johnston's Obsession

Philip Johnston, the CEO and co-founder of Starcloud (formerly Lumen Orbit), is the kind of person who thinks about problems differently. While most people were comfortable with how data centers worked on Earth, Johnston became obsessed with a different question: What if we moved them to outer space?

It sounds insane: building data centers in the harshest environment imaginable, where radiation zaps electronics, tiny debris travels at tens of thousands of kilometers per hour, and temperatures swing between scorching heat and deep cold. But Johnston saw what others missed: space addresses the two biggest problems with Earth-based data centers at once, power and cooling.

On November 2, 2025, Johnston's vision became tangible. Starcloud launched the first data center-grade GPU into orbit, aboard a SpaceX Falcon 9 rocket. This wasn't just another satellite. This was the Starcloud-1 demonstrator, carrying an NVIDIA H100 GPU—the kind of chip that costs tens of thousands of dollars and powers the world's most advanced AI systems.

When that rocket lifted off from the launch pad, it carried the weight of Johnston's decade-long dream. For 11 months, Starcloud-1 will orbit Earth at 325 kilometers altitude, testing whether commercial AI hardware can actually survive and thrive in the brutal space environment.

"This is the first time anybody's tried to launch data center-grade terrestrial Earth-based GPUs into space," Johnston explained. The stakes couldn't be higher—if this fails, the entire orbital computing dream dies. If it succeeds, he's fundamentally rewritten the rules of computing.

Modern AI data center with server racks and a technician working on a laptop, illustrating the infrastructure powering advanced AI workloads

The Nightmare Scenario: When Your Digital Life Destroys Your Community

To understand why entrepreneurs like Johnston are willing to risk billions on space-based infrastructure, you need to understand what's happening on Earth right now.

In Mesa, Arizona, a region already struggling with water scarcity, massive data centers are consuming millions of gallons of freshwater daily to cool their servers. Local residents are watching their water bills skyrocket while their aquifers deplete. Meanwhile, communities near data centers report respiratory issues and health problems from air pollution.

In rural Iowa, where data centers have proliferated, the facilities consume massive amounts of electricity—so much that local power grids are stressed and sometimes fail. Residents never asked for this. They never voted on it. But they're bearing the environmental cost.

This is the hidden side of AI that tech companies don't advertise. When you ask ChatGPT to write a poem, someone in Arizona or Iowa pays the price through depleted water supplies or unreliable power grids.

Here's the math that keeps environmental scientists awake at night: Data centers currently account for 4.4% of all US electricity consumption. By 2030, that share could nearly double, to over 7.5%. A single hyperscale AI data center can consume as much power as a small city.

And it's not just electricity. Manufacturing the specialized chips and hardware requires extracting rare earth minerals in environmentally destructive ways. Once that equipment becomes obsolete—which happens quickly in the AI industry—it becomes toxic electronic waste containing lead, mercury, and other hazardous materials that contaminate soil and water.

Professor Mahmut Kandemir at Penn State, who has spent his career optimizing computer systems, recently shifted his focus to sustainability. "Without proper sustainability measures, the expansion of AI could accelerate ecological harm and worsen climate change," he warns. This isn't an opinion—it's a warning from someone who understands the technical depth of the problem.

The Space Solution: Where the Sun Never Sets and Heat Just Vanishes

So why is space different?

In orbit, the sun doesn't have to set. A satellite in the right orbit, such as a dawn-dusk sun-synchronous orbit, sits in nearly continuous sunlight, unlike Earth-based solar panels that generate power for roughly half the day and then depend on battery storage or grid electricity at night. This means orbital data centers powered by solar arrays could run on renewable energy almost around the clock, with no fuel cost.

But the real innovation is something most people don't think about: cooling.

Data center operators on Earth spend enormous resources, and enormous amounts of water, just to keep their servers from overheating. In space, no water is needed. Deep space is an extremely cold heat sink at about -270°C, just a few degrees above absolute zero. Waste heat can be radiated away into the cosmos through radiator panels, without water, cooling towers, or power-hungry air conditioning systems.
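Radiating heat into vacuum follows the Stefan-Boltzmann law, so the radiator area needed grows with the heat load. Here is a rough sketch in Python, assuming an idealized flat radiator. The emissivity, radiator temperature, and 700 W heat load are illustrative assumptions rather than figures from Starcloud, and sunlight or Earth's infrared falling on the radiator is ignored:

```python
# Stefan-Boltzmann constant in W / (m^2 K^4)
SIGMA = 5.670374419e-8

def radiator_area_m2(heat_w, radiator_temp_k=300.0, sink_temp_k=2.7,
                     emissivity=0.9, sides=2):
    """Area of a flat radiator needed to reject heat_w watts by radiation alone.

    Assumptions (illustrative): uniform radiator temperature, both faces
    radiating to deep space, no absorbed sunlight or Earth infrared.
    """
    # Net radiated power per square meter of one face
    net_flux = emissivity * SIGMA * (radiator_temp_k**4 - sink_temp_k**4)
    return heat_w / (net_flux * sides)

# Hypothetical 700 W heat load, roughly one high-end GPU at full power.
area = radiator_area_m2(700.0)
print(f"{area:.2f} m^2 of two-sided radiator")
```

Under these assumptions the area scales linearly with the heat load, which is why cooling in orbit is a matter of radiator size rather than water.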

Think about what this means: You eliminate your biggest operating costs—electricity and water cooling—simultaneously. In orbital data centers, these costs essentially become zero once the facility is operating.

Starcloud's vision is staggeringly ambitious: solar arrays roughly 4 kilometers on a side producing 5 gigawatts of continuous power in space. To put this in perspective, that's comparable to what a very large terrestrial data center complex consumes, except this would be powered entirely by the sun with zero freshwater consumption and zero direct emissions.
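As a sanity check on those figures, the sketch below estimates what a square array in continuous sunlight could produce. The solar constant of about 1361 W/m² is a standard value. Reading the array as 4 km on a side, and treating it as always facing the sun, are assumptions made for illustration:

```python
SOLAR_CONSTANT_W_M2 = 1361.0  # approximate sunlight intensity above the atmosphere

def array_power_gw(side_km, efficiency):
    """Electrical output in GW of a square array facing the sun."""
    area_m2 = (side_km * 1000.0) ** 2
    return area_m2 * SOLAR_CONSTANT_W_M2 * efficiency / 1e9

def required_efficiency(side_km, target_gw):
    """Conversion efficiency needed to reach target_gw from the array."""
    return target_gw / array_power_gw(side_km, 1.0)

eff = required_efficiency(4.0, 5.0)
print(f"Efficiency needed for 5 GW from a 4 km x 4 km array: {eff:.1%}")
```

If the required efficiency comes out within the range of real solar cells, the 5-gigawatt figure is at least physically consistent with an array of that size.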

Solar panels on the space station generate continuous solar power in orbit around Earth

Google's Moonshot: When the Almighty Tech Giant Joins the Space Race

If you needed any validation that this isn't fringe science fiction, consider what happened on November 3, 2025—just one day after Starcloud's launch.

Google announced Project Suncatcher.

Google, the company that runs AI inference workloads for millions of people every day, decided to put its own Tensor Processing Units (TPUs) into space. The company plans to build prototype satellites carrying its proprietary AI chips and launch them by early 2027 in partnership with Planet Labs.

Why would Google do this? Because the company calculated that space-based AI infrastructure could become economically superior to Earth-based data centers by 2035. That assessment comes from one of the most carefully calculating companies in tech history.

Sundar Pichai, Google's CEO, didn't make a flashy announcement. But internally, Google had already assessed radiation tolerance. The company tested its latest TPU chips in a particle accelerator to simulate radiation exposure in low Earth orbit (LEO). The results were striking: Google's chips withstood radiation doses nearly three times higher than expected without failing.

What does this mean? It means the technology works. It means Google—the company with virtually unlimited resources—looked at space data centers and said: "Yes, we're betting billions on this."

Axiom Space: The Pragmatic Path—Data Centers for the People Already in Space

While Starcloud and Google are building new infrastructure, Axiom Space is taking a more immediate approach: using the International Space Station.

By 2027, Axiom plans to deploy its Orbital Data Center Node (AxODC Node ISS) on the ISS—infrastructure that already exists, already orbits Earth, and is already powered and cooled. The company has been operating cloud computing capabilities on the space station since 2022, proving the concept works.

Jason Aspiotis, Axiom's global director of in-space data and security, is thinking practically about the future: "By 2027, we plan to have at least three ODC nodes, interconnected and interoperable with each other, providing services to any satellite and spacecraft with compatible communication terminals."

This isn't science fiction—it's happening now. Axiom is even using commercial off-the-shelf hardware: Phison's 122.88-terabyte SSDs (solid state drives) designed for enterprise data centers, hardened for space. The company is proving that you don't need specially built space hardware—you can take proven Earth technology, protect it from radiation, and fly it.

The Business Opportunity: When Solving Earth's Crisis Becomes Profitable

This is where the story gets interesting, because solving the AI energy crisis is also becoming a business opportunity.

Crusoe Energy, a company that specializes in placing compute infrastructure near unique energy sources (like geothermal sites or oil fields), has partnered with Starcloud. The company announced it will become "the first public cloud operator to run workloads in outer space."

What does this mean in practical terms? By early 2027, you might be able to rent computing power from space-based data centers just like you currently rent from Amazon Web Services or Microsoft Azure. For companies with massive AI workloads, running training jobs in orbit could cut their electricity costs by a factor of 10.

The market opportunity is enormous. Investment firms project the in-orbit data center market will grow from $1.77 billion in 2029 to $39.09 billion by 2035—a 67.4% compound annual growth rate. This isn't speculative venture capital nonsense—these are serious projections from investment banks like Morgan Stanley and Goldman Sachs.
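The growth rate can be checked from the two endpoint figures. This is a minimal sketch, assuming the projection counts six years of compounding from 2029 to 2035:

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

# Market size in billions of dollars, as quoted in the article
rate = cagr(1.77, 39.09, 2035 - 2029)
print(f"Implied CAGR: {rate:.1%}")
```

The result lands within rounding distance of the quoted 67.4%, so the three numbers are consistent with one another.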

Cully Cavness, Crusoe's co-founder, put it simply: "By partnering with Starcloud, we will extend our energy-first approach from Earth to the next frontier: outer space."

The Real People: Who This Actually Affects

Let's step back from the technology for a moment and talk about what this actually means for real people.

Climate scientists and environmental engineers are watching this closely because they understand the alternative: if we don't solve the AI energy crisis, we're looking at a climate disaster. Data centers already contribute 1-2% of global greenhouse gas emissions. If AI continues to multiply demand without solutions, that number could reach 10-15% by 2030.

Communities in Arizona, Iowa, and Texas—where data centers have proliferated—are hoping solutions like orbital infrastructure will take some pressure off their local power grids and water supplies. For them, this isn't theoretical. It's about whether their kids can have water to drink and whether the power grid stays stable.

Workers in the tech industry are watching because their industry has created a genuine problem—and now they're trying to solve it responsibly. Engineers at Starcloud, Google, and Axiom are tackling genuine technical challenges: radiation hardening, thermal management, optical communications at vast distances.

Young entrepreneurs and students are watching because orbital computing represents a genuine frontier—a place where innovation can still change the world and address real problems.

And everyday users of AI and cloud services are (unknowingly) watching because the question of whether their digital habits are sustainable is being answered in real time.

The Challenges Are Real (And They Matter)

To be honest, this isn't a guaranteed solution. There are real, serious obstacles.

Cosmic radiation is a genuine threat. Cosmic rays can corrupt data and damage electronics. Starcloud-1's 11-month mission will be the first real test of how well commercial data center chips handle this. If the radiation damage is worse than expected, the entire premise collapses.

Space debris is another serious concern. Tiny fragments of defunct satellites and rocket stages travel at speeds of over 27,000 kilometers per hour. A collision with a large structure could be catastrophic. Starcloud's planned 4-kilometer solar arrays present an enormous target.

Thermal management without water cooling is hard. Heat pipes, phase-change materials, and radiators must be engineered precisely. One misstep and the entire facility overheats and stops working.

Launch costs, while dropping, are still astronomical. Even with SpaceX's Falcon 9 at $6,500 per kilogram, putting multi-ton data center infrastructure in orbit isn't cheap. The economics only work if launch costs continue plummeting.

Philip Johnston at Starcloud is betting on Starship, SpaceX's next-generation rocket currently in development. If Starship reaches its target of roughly $67 per kilogram to orbit, about a hundredth of the Falcon 9 figure, the business case becomes far stronger. If it doesn't, orbital data centers might remain a niche technology.
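To see how much the per-kilogram price matters, here is a small sketch comparing the two launch prices. The 10-tonne payload is a hypothetical module chosen only for illustration, and the Starship figure is the article's target, rounded:

```python
FALCON9_USD_PER_KG = 6_500.0   # figure quoted in the article
STARSHIP_USD_PER_KG = 67.0     # article's Starship target, rounded

def launch_cost_usd(mass_kg, usd_per_kg):
    """Launch cost for a payload at a flat per-kilogram price."""
    return mass_kg * usd_per_kg

payload_kg = 10_000.0  # hypothetical 10-tonne module, for illustration only
falcon = launch_cost_usd(payload_kg, FALCON9_USD_PER_KG)
starship = launch_cost_usd(payload_kg, STARSHIP_USD_PER_KG)
print(f"Falcon 9: ${falcon:,.0f}  Starship target: ${starship:,.0f}")
print(f"Ratio: {falcon / starship:.0f}x")
```

The ratio does not depend on the payload mass, so the same gap applies whether the hardware weighs one tonne or a thousand.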

The Environmental Math: Does Space Actually Help?

Here's the question everyone should ask: Is launching rockets into space actually better for the environment than keeping data centers on Earth?

The answer, according to research, is a qualified yes—but with conditions.

A single Falcon 9 launch emits approximately 400-550 tons of CO2, equivalent to about 80-110 average American cars driving for one year. That sounds bad until you do the math: a solar-powered orbital data center operating for five years offsets its launch carbon footprint through the grid emissions it avoids, and from then on its computing adds no direct emissions.

Compare this to a terrestrial AI data center running for five years on grid electricity. The electricity that US data centers use has a carbon intensity 48% higher than the national average, because facilities are concentrated in regions powered by coal and natural gas. The terrestrial facility would emit orders of magnitude more CO2 over the same five-year period.

The environmental case for orbital data centers actually gets stronger as time goes on. Once the launch emissions are paid back, every additional year of operation adds to the net carbon savings.
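The payback idea can be sketched as a simple calculation: divide the launch emissions by the grid emissions avoided each year. The 100 kW payload and the grid intensity of 0.5 kg of CO2 per kWh below are hypothetical inputs, and the sketch ignores hardware manufacturing and any other launch effects:

```python
HOURS_PER_YEAR = 8760

def avoided_tons_per_year(it_power_kw, grid_kg_co2_per_kwh):
    """CO2 (metric tons) a grid-powered facility of this size would emit per year."""
    return it_power_kw * HOURS_PER_YEAR * grid_kg_co2_per_kwh / 1000.0

def payback_years(launch_tons_co2, it_power_kw, grid_kg_co2_per_kwh):
    """Years of operation needed to offset the launch emissions."""
    return launch_tons_co2 / avoided_tons_per_year(it_power_kw, grid_kg_co2_per_kwh)

# Hypothetical inputs: upper launch estimate from the article, a 100 kW
# payload, and an assumed grid intensity of 0.5 kg CO2 per kWh.
years = payback_years(550.0, 100.0, 0.5)
print(f"Payback: {years:.2f} years")
```

The payback time shrinks as the payload's power rises or the displaced grid gets dirtier, so the result is only as good as those two inputs.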

The Timeline: When Will This Actually Happen?

This isn't happening tomorrow, but it's happening faster than most people realize:

Right now (November 2025): Starcloud-1 is orbiting Earth with the first data center-grade GPU in space. Google is preparing TPU-equipped satellites. Axiom is building the ISS data center node.

2026-2027: Starcloud launches a significantly more powerful satellite with NVIDIA's Blackwell architecture. Google's first TPU satellites launch. Axiom deploys the initial ODC nodes on the ISS. Crusoe begins offering limited cloud computing from space.

2027-2030: Multiple companies launch pilot constellations. The market begins establishing pricing models. The first commercial customers run real AI workloads from orbit.

2030-2035: Starship launches make massive orbital platforms economical. The in-orbit data center market reaches $39 billion. Some of the world's new data center capacity is being built in space rather than on Earth.

2035-2040: Starcloud and competitors operate multi-gigawatt orbital facilities. Space-based computing becomes economically competitive with or superior to Earth-based alternatives.

This isn't speculation. These are funded companies with rockets booked and hardware in development.

What This Means for You

If you're reading this as an American who cares about technology, sustainability, or the future:

You're living through a technological inflection point. The question of how humanity powers computation is being answered in real time by ambitious entrepreneurs who refuse to accept the status quo.

If you use AI tools, stream video, or rely on cloud services, you're part of the problem driving this innovation. And if orbital data centers succeed, you might also be part of the solution—using computational services powered entirely by clean, renewable, unlimited solar energy in space.

If you live near a data center in Arizona, Iowa, or Texas, this innovation might relieve pressure on your local water and power resources. That's not theoretical—that's your daily life potentially improving.

If you're an entrepreneur or engineer, this represents a genuine frontier. Companies like Starcloud are hiring people who want to build infrastructure for the next generation of computing. The problems are hard, the stakes are high, and the opportunity to make an impact is real.

If you care about climate change, here's hope: a group of people refuse to accept that AI's energy crisis is unsolvable. They're looking beyond terrestrial constraints and imagining solutions that seem impossible until they work.

Conceptual illustration of Earth encircled by a network of satellites and a futuristic space station symbolizing orbital data centers in space

The Bigger Picture: Why This Moment Matters

We're at a crossroads. AI is consuming energy at unsustainable rates. Data centers are depleting water supplies and straining power grids. Communities are suffering. But instead of accepting this as inevitable, some of the brightest engineers and entrepreneurs in the world are asking: "What if we solved this completely differently?"

That question has led to orbital data centers—satellites with GPUs, solar arrays in space, and heat radiating into the cosmic void.

It's audacious. It's risky. It might fail. But if it succeeds, it fundamentally solves one of the defining challenges of the AI era: How do we power the future without destroying the present?

The answer, it turns out, might be literally looking up at the stars.

Key Takeaways for Readers:

AI is consuming energy unsustainably: Data centers could use over 7.5% of all US electricity by 2030, straining power grids and depleting water supplies in vulnerable communities.

Space offers a promising solution: Near-continuous solar energy, radiative cooling into the vacuum, and zero water consumption could make orbital data centers economically and environmentally viable.

This is happening now, not someday: Starcloud has launched hardware. Google has announced plans. Companies are hiring. The future is being built.

You're part of this story: Your use of AI and cloud services is driving the innovation, and the solutions being built will potentially power your digital life in the future.